Open source is about to get a lot messier. Not because the code is getting worse – because AI agents just changed the economics of “fork it and fix it yourself.”

Here’s the thing. For twenty-plus years, the unwritten social contract of open source was roughly: if you find a bug, file an issue; if you’re feeling ambitious, send a PR; be patient, maintainers are volunteers. That worked because patching someone else’s unfamiliar codebase was hard. Reading the code, understanding the conventions, writing a fix that didn’t break anything else – that was real work. Most users never tried. They filed the issue and waited.

That friction is gone.

The New Default: Fork, Prompt, Ship

Think about what happens now. You hit a bug in some library you depend on. The upstream maintainer is busy, or the project has 400 open issues, or the maintainer has strong opinions about the shape of the fix. Ten years ago you’d work around it. Five years ago you’d file a detailed issue and maybe submit a PR after a weekend.

Today? You fork it, open Claude Code (or Cursor, or whatever your agent of choice is), and say “this repo has a bug where X happens – investigate and fix it.” Twenty minutes later you have a working patch. You merge it into your fork. You ship.

Do you send the patch upstream? Maybe. But here’s where it gets interesting.

The AI Hesitation Problem

A growing number of OSS projects have explicit policies – or strong cultural signals – against AI-generated contributions. Some are worried about licensing. Some are worried about code quality. Some are just philosophically opposed. I don’t want to litigate whether those positions are right (that’s a topic for another day), but they’re real.

So you, the person who just fixed the bug, now face a choice:

- Submit the PR, maybe disclose the AI assist, and watch it sit for six months while someone decides how to feel about it

- Submit the PR, don’t disclose, hope nobody notices, and feel slightly dirty about it

- Don’t submit. Keep the fix in your fork. Move on with your life.

Guess which one a lot of people are going to pick?

Hoo boy. I can already tell you.

Fragmentation at Scale

Multiply this across millions of repos and tens of millions of developers now wielding capable agents. The math is not subtle:

- Forks proliferate

- Divergence accelerates

- Upstream becomes one of many viable versions, not the canonical one

- Finding the “right” fork for your use case becomes a research project

We’ve had fragmentation before: Linux distributions, the OpenSSL forks after Heartbleed, the node/io.js split. But those were episodic and driven by specific events. What’s coming is continuous, because every bug anyone hits is now a potential fork point.

One repo. A thousand futures.

Think about that for a minute.

Yes, the Naysayers Are Right (Sort Of)

Don’t get me wrong. There are real concerns here.

AI-generated patches can be subtly wrong. They can miss context the agent didn’t load. They can pass tests while breaking behavior nobody wrote tests for. Maintainers who are cautious about accepting these patches aren’t being Luddites – they’re protecting a codebase they’ve poured years into.

BOTH can be true. The speed advantage is real AND the quality risk is real. Pretending either one doesn’t exist is baloney.

But the economic pressure doesn’t care about our philosophical preferences. The fix is cheap now. Waiting is expensive. Users will route around the friction. They always do.

A Proposal: Make Forks Legible

So what do we do about it? My early thinking:

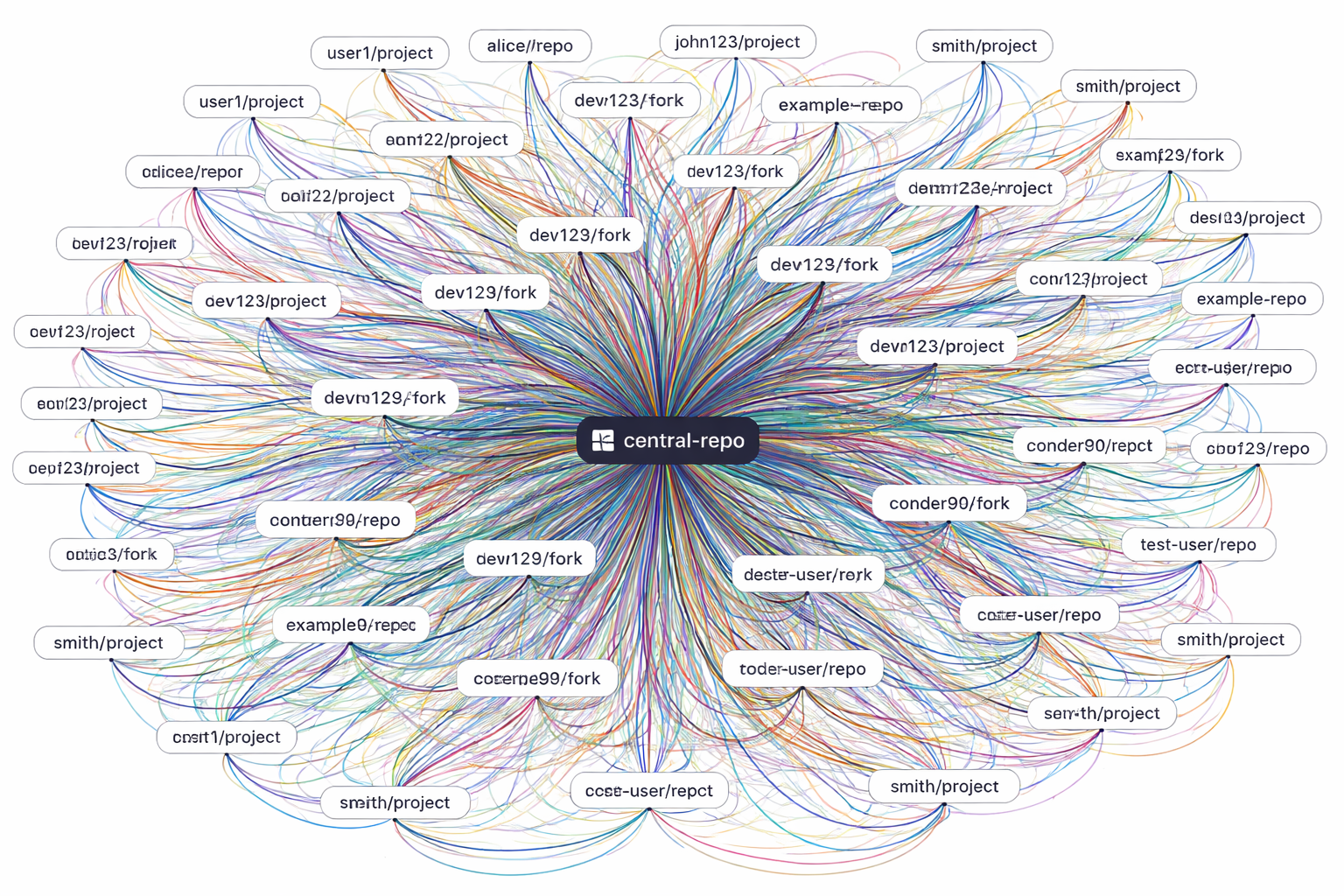

We need a tool that treats the entire fork graph of a project as a first-class thing. Not “the upstream repo with some forks listed in a tab you never click on,” but a real, queryable view of:

- Every active downstream fork of a given project

- The diffs between those forks and upstream (and each other)

- The activity signal on each fork – commits, issues, downloads, stars over time

- An estimate of how much AI assist went into each fork’s changes

- A point-in-time snapshot of all of this, so you can see how the landscape shifted

That data wants to live somewhere. Probably a data store keyed by project, updated on some cadence, with history preserved so you can ask “what did the fork landscape look like for this project three months ago?”
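To make that concrete, here is a rough sketch of what such a store could look like. It is an in-memory stand-in, not a design: the class names, the field names, and the idea of a single 0-to-1 AI-assist number are all assumptions made up for illustration.

```python
from __future__ import annotations

from bisect import bisect_right
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class ForkRecord:
    """One fork's state at snapshot time. Every field name here is hypothetical."""
    full_name: str             # e.g. "alice/libfoo"
    commits_ahead: int         # commits the fork has that upstream lacks
    commits_behind: int        # upstream commits the fork lacks
    stars: int
    last_commit: date
    ai_assist_estimate: float  # 0.0 to 1.0, however that ends up being estimated


@dataclass
class ForkGraphStore:
    """Snapshots keyed by project, with history preserved."""
    _snapshots: dict[str, list[tuple[date, list[ForkRecord]]]] = field(default_factory=dict)

    def record(self, project: str, taken_on: date, forks: list[ForkRecord]) -> None:
        """Add a snapshot and keep the project's history in date order."""
        history = self._snapshots.setdefault(project, [])
        history.append((taken_on, forks))
        history.sort(key=lambda snap: snap[0])

    def as_of(self, project: str, when: date) -> list[ForkRecord]:
        """Return the latest snapshot taken on or before `when` (empty if none)."""
        history = self._snapshots.get(project, [])
        dates = [taken_on for taken_on, _ in history]
        idx = bisect_right(dates, when)
        return history[idx - 1][1] if idx else []
```

Asking "what did the landscape look like three months ago?" then becomes `store.as_of("libfoo", some_date)`. A real version would need durable storage and diffs between snapshots, but the shape of the query stays the same.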

Then – and this is the part I’m actually excited about – you hand the user a prompt interface. “I need a version of libfoo that fixes the TLS renegotiation bug, is actively maintained, and I don’t care whether AI wrote the patches as long as the test suite passes.” Out comes a recommendation: here’s the fork that best matches your constraints.

It’s a search engine for the fork graph, weighted by your own tolerance for the tradeoffs.
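Underneath the prompt, something has to turn "my constraints" into a ranked list. Here is a minimal sketch of that step only, assuming the prompt has already been parsed into structured constraints. `Candidate`, `Constraints`, and `recommend` are hypothetical names, and sorting by freshness is just one plausible tiebreaker.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A fork as the index might describe it (illustrative fields only)."""
    name: str
    fixes: frozenset[str]      # tags for the bugs this fork claims to fix
    days_since_commit: int
    tests_pass: bool
    ai_assist_estimate: float  # 0.0 (none) to 1.0 (all)


@dataclass(frozen=True)
class Constraints:
    """What the user said they care about, after the prompt is parsed."""
    must_fix: frozenset[str]
    max_days_stale: int
    require_passing_tests: bool
    max_ai_assist: float       # highest AI-assist estimate the user will accept


def recommend(candidates: list[Candidate], want: Constraints) -> list[Candidate]:
    """Drop forks that violate a hard constraint, then rank freshest first."""
    eligible = [
        c for c in candidates
        if want.must_fix <= c.fixes
        and c.days_since_commit <= want.max_days_stale
        and (c.tests_pass or not want.require_passing_tests)
        and c.ai_assist_estimate <= want.max_ai_assist
    ]
    return sorted(eligible, key=lambda c: (c.days_since_commit, c.name))
```

The libfoo request above would map to something like `Constraints(frozenset({"tls-renegotiation"}), 90, True, 1.0)`: must fix the bug, must be recently active, tests must pass, and any amount of AI assist is acceptable.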

The Meta-Repo

Now let’s extend it.

Imagine a “meta-repo” – a template GitHub repo that captures the above analysis for a specific project or set of projects. Anyone can clone the template and instantiate their own meta-repo pointed at the dependencies they care about. The meta-repo runs the analysis, writes the index, keeps it updated, and exposes a set of pre-built user profiles:

- “No AI” – only surface forks that disclose zero AI involvement

- “AI with human review” – forks where humans have signed off on the AI changes

- “Don’t care, as long as tests pass” – forks with full automated test coverage and a passing suite, AI or not

The data lives in the meta-repo itself. It’s version controlled. It’s forkable. It’s transparent. You can argue with someone else’s classification by sending a PR. The whole thing is open source, turtles all the way down.
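Since the profiles are just named rules over the index, they can live in the meta-repo as plain code. A sketch, assuming each index entry carries a self-reported `ai_disclosure` value plus two booleans (all hypothetical field names, as are the profile keys):

```python
from __future__ import annotations

from typing import Callable, TypedDict


class ForkEntry(TypedDict):
    """One row of the meta-repo's index (illustrative schema)."""
    name: str
    ai_disclosure: str    # "none", "assisted", or "unknown"
    human_reviewed: bool  # a human signed off on the changes
    tests_pass: bool


# Each profile is a predicate over an index entry.
PROFILES: dict[str, Callable[[ForkEntry], bool]] = {
    # "unknown" is not treated as "none": no disclosure is not zero AI.
    "no-ai": lambda f: f["ai_disclosure"] == "none",
    "ai-with-human-review": lambda f: f["ai_disclosure"] == "assisted" and f["human_reviewed"],
    "tests-pass": lambda f: f["tests_pass"],
}


def surface(index: list[ForkEntry], profile: str) -> list[str]:
    """Return the names of the forks a given profile would show."""
    keep = PROFILES[profile]
    return [f["name"] for f in index if keep(f)]
```

Disagreeing with a classification is then a one-line diff to the index or to a predicate, which is exactly the kind of change a PR is good at carrying.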

This is the kind of thing I’d normally call overkill. But the fragmentation problem is coming whether we build tools for it or not. Better to have the infrastructure ready.

Echoes from the Submarine

USS Richard B. Russell (SSN-687) in San Francisco Bay, circa 1990

I keep thinking about how the Navy handled configuration management on a nuclear submarine. Every piece of equipment had an identified configuration state. Every change was tracked. Every deviation from the baseline was documented and justified. You knew, exactly, what version of everything was on your boat, because you had to. The stakes weren’t a failed unit test. The stakes were the loss of a billion-dollar submarine, a reactor casualty that contaminates a harbor for a generation or more, a crew of 120+ never coming home – or worse, an incident in the wrong waters at the wrong time that starts a shooting war with another nuclear power. That’s not hyperbole. That was any given Tuesday.

Open source hasn’t needed this kind of change-tracking rigor across forks because the pace of change was human-scale, and the social norms of those humans preferred that folks upstream their work. Agents break both assumptions. We’re about to see a surge of divergent forks that none of our current tooling was designed for.

The boat survived by making state legible. We need to do the same for the fork graph.

What’s Next

I don’t have this built yet. I’m noodling on it. The hard parts are:

- Estimating AI assist – there’s no reliable signal. Commit metadata, PR descriptions, and commit message patterns are all gameable

- Activity weighting – stars are noise, commits can be churn, the real signal is hard to define

- Keeping the data fresh – the GitHub API has rate limits, and the fork graph for a big project is enormous

None of these are blockers. They’re just the interesting problems.
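To show how thin the AI-assist signal is, here is about the best a naive first pass can do: count commits whose messages openly disclose an assistant. The marker patterns below are my own assumptions, not a standard, and anyone can strip or fake them, so the result is at most a floor on disclosed assist.

```python
import re

# Hypothetical marker list. Real tools vary, and all of these are gameable.
AI_MARKERS = [
    # A co-author trailer naming an assistant, on its own line.
    re.compile(r"^co-authored-by:.*\b(claude|copilot|cursor)\b", re.IGNORECASE | re.MULTILINE),
    # A "generated with/by <tool>" note anywhere in the message.
    re.compile(r"\bgenerated (with|by)\b.*\b(claude|copilot|cursor)\b", re.IGNORECASE),
]


def disclosed_ai_fraction(commit_messages: list[str]) -> float:
    """Fraction of commits that openly mention an AI assistant."""
    if not commit_messages:
        return 0.0
    flagged = sum(
        1 for message in commit_messages
        if any(pattern.search(message) for pattern in AI_MARKERS)
    )
    return flagged / len(commit_messages)
```

A commit that thanks Claude Shannon in its body is not flagged, because neither pattern matches a bare name. A commit written entirely by an agent with the trailer removed is not flagged either, which is the whole problem.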

If you’re thinking about the same things – or if you’ve already built something in this space – drop me a note. I’d rather steal a good idea than reinvent a mediocre one.

The fragmentation is coming. The question is whether we build the map before or after we get lost.

Parting Thought

There’s also a whole separate question of attribution, authorship, and licensing. That’s a topic for another post, but it’s just as real, and it will probably have a much larger impact sooner. Stay tuned.