Think self-driving technology requires deep pockets to play with? Think again. AWS DeepRacer and Autoware are the leading edges of self-driving automation for the maker.

TL;DR

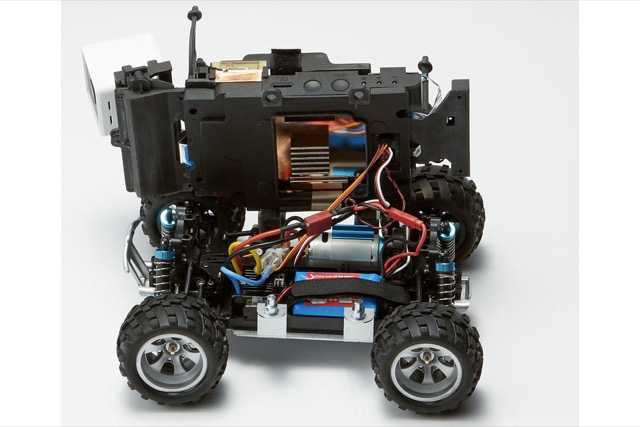

AWS DeepRacer is a low-cost, ROS-based robotic car that uses machine learning (reinforcement learning) and its camera input to drive courses it’s been “trained” to drive. With the online simulator, this tool can get you started developing self-driving cars for under $400.
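To make the “trained” part concrete: in DeepRacer, the thing you actually write is a Python reward function that scores each step the car takes, and the reinforcement learning does the rest. Here is a minimal sketch of a center-line-following reward. The `params` keys used (`track_width`, `distance_from_center`, `all_wheels_on_track`) are input parameters I know from the AWS docs, but the band widths and reward values are my own illustrative choices, not anything tuned.

```python
def reward_function(params):
    """Reward the car for staying on the track and near the center line.

    The thresholds and reward values below are illustrative assumptions.
    """
    track_width = params["track_width"]
    distance_from_center = params["distance_from_center"]
    all_wheels_on_track = params["all_wheels_on_track"]

    # Off the track: return a tiny reward so the agent learns to avoid it.
    if not all_wheels_on_track:
        return 1e-3

    # Three bands measured outward from the center line.
    marker_1 = 0.10 * track_width
    marker_2 = 0.25 * track_width
    marker_3 = 0.50 * track_width

    if distance_from_center <= marker_1:
        reward = 1.0
    elif distance_from_center <= marker_2:
        reward = 0.5
    elif distance_from_center <= marker_3:
        reward = 0.1
    else:
        reward = 1e-3  # likely about to leave the track

    return float(reward)
```

The whole trick is in how you shape that number: reward only the center line and you get a cautious car, reward speed and you get a fast one that crashes a lot.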

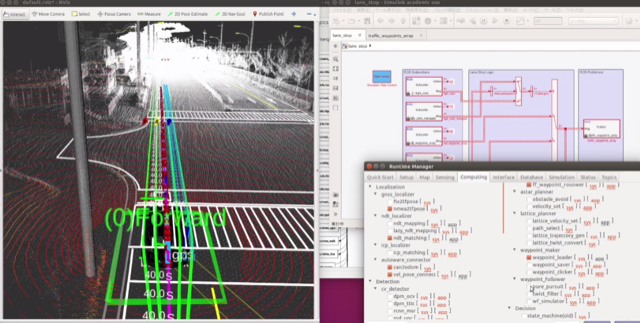

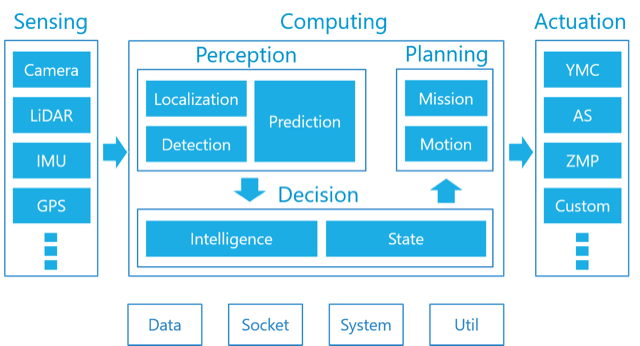

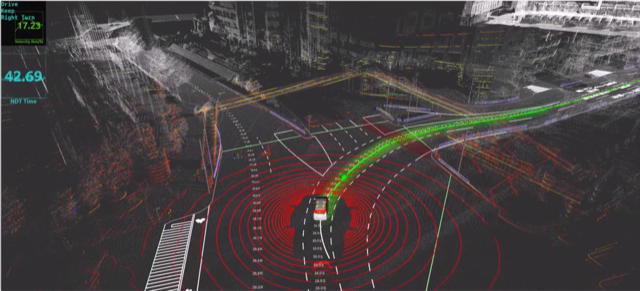

Autoware is the world’s first “All-in-One” open-source software for autonomous driving technology. It is based on ROS 1 and available under the Apache 2.0 license on GitHub. It requires 8 cores and 32GB of RAM so it’s not going to run on a Raspberry Pi. It supports camera, LIDAR, IMU and GPS inputs. Those compute and sensor requirements are going to cost a lot more than the DeepRacer, but I’m guessing you could build something in the $1000 range that works.

The important thing is that there’s a starting point for the platform.

Summary

Here in San Francisco we see self-driving enabled cars on the road a lot. Many of them are annoying as hell since they make driving decisions much more slowly than humans do. Geeks among us think “damn, how hard is it really to decide the cross-walk is empty!” It’s easy to think that you need the cash of a VC-backed startup to really start playing with that tech. Not so. It’s still pricey: $400 for the DeepRacer and likely $1000-1500 for a proper Autoware robot. But the tooling is there if you have a bit of money, at least some background in coding, and the time and energy to learn.

It’s ripe for innovation!!!

Imagination

I have a DeepRacer and have another on order. I bought one within minutes of it being announced at AWS re:Invent (I blogged about that at the time). I have one sitting here in my home office and only lack the time to play with it. I got lucky enough to be invited by AWS to a four-hour solo-focus-session where an AWS CX person watched me unbox and learn to set it up. It was a bit strange to be under the microscope, but I think I gave them some good feedback and I got to keep the DeepRacer that I unboxed. Win-win!

One thing that I am extremely excited about is the fact that the DeepRacer tool is based on Robot Operating System - ROS. And it’s not all locked out either. AWS is not hiding the guts. It’s an Ubuntu board with an Atom CPU and 4GB of RAM (specs here). You can develop right on the car’s main node. The main code seems to be in Python interfacing with ROS. So this seems extremely hackable. Hackaday is even postulating alternative hardware, so this ecosystem is about to explode.

In the last few days I came across Autoware. It’s non-trivial and is being used as the basis for commercial offerings already, so it’s real. It looks reasonably modularized. Being based on ROS, it is almost certainly all written in C++, so it’s probably reasonably performant.

Given the hardware requirements this is probably a bit harder to set up, and the bugaboo will be I/O bandwidth, I am sure. USB webcams are the obvious choice for cameras, but can you do multiple cameras at once? You may need USB3, which means you are not even going to use an Intel NUC for this. The GPS is easy over USB, and you could make a USB adapter for a 9DoF IMU pretty easily. I was reading about a module that a guy built that did the sensor fusion on the board and had one-degree heading accuracy for under $75, if I recall. I’ll try to look that up and revise this post later with a link. You would think it would be the LIDAR that was the harder sensor to deal with, but there are a lot of options for that. Adafruit sells an interesting scanning LIDAR for $114. Sparkfun has a non-rotating unit as well as a rotating one, granted more expensive at $320. My point is that there are many options out there. You might have to tweak some of the Autoware code - or more likely, the underlying ROS libraries - to make it work, but the products are there to be integrated.
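On the multiple-camera question, a quick back-of-the-envelope calculation helps. This is only a rough sketch: it assumes uncompressed YUYV video at 2 bytes per pixel, and it assumes only about 60% of the bus signalling rate is usable for video, which is a guess on my part rather than a measured figure. The function names are made up for this example. The 480 Mbit/s and 5 Gbit/s figures are the nominal USB 2.0 and USB 3.0 signalling rates.

```python
def raw_stream_mbps(width, height, fps, bytes_per_pixel=2):
    """Bandwidth of one uncompressed video stream, in Mbit/s."""
    bits_per_second = width * height * bytes_per_pixel * fps * 8
    return bits_per_second / 1e6


def max_cameras(bus_mbps, stream_mbps, usable_fraction=0.6):
    """How many such streams fit on one bus.

    usable_fraction is an assumed allowance for protocol overhead,
    not a measured value.
    """
    return int((bus_mbps * usable_fraction) // stream_mbps)


USB2_MBPS = 480    # nominal USB 2.0 high-speed signalling rate
USB3_MBPS = 5000   # nominal USB 3.0 SuperSpeed signalling rate

vga = raw_stream_mbps(640, 480, 30)      # about 147 Mbit/s
hd720 = raw_stream_mbps(1280, 720, 30)   # about 442 Mbit/s

for name, bus in (("USB2", USB2_MBPS), ("USB3", USB3_MBPS)):
    print(name, "VGA cameras:", max_cameras(bus, vga),
          "720p cameras:", max_cameras(bus, hd720))
```

Under those assumptions a single USB2 bus carries one raw VGA stream and no raw 720p stream, while USB3 has room for several of either. Many webcams can send MJPEG instead of raw frames, which changes the picture a lot, so treat this as a worst case.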

The building blocks are there. Damn, I wish I had more time!